Pokémon Go’s 30 Billion Photos Are Teaching Delivery Robots How to Navigate

From catching Pikachu to delivering pizza—this may be one of the most unexpected commercialization paths for crowdsourced data.

Author: Will Douglas Heaven

Compiled by: TechFlow

TechFlow Intro: Niantic has turned 30 billion city photos taken by Pokémon Go players into a new business. Its AI subsidiary, Niantic Spatial, trained a visual positioning system on this data and reports centimeter-level accuracy, far better than GPS manages in urban canyons. Its first major customer? Coco Robotics, a food-delivery robot company.

Full article below:

Pokémon Go is the world’s first breakout AR game. Launched in 2016 by Niantic, a Google spinoff, the game used augmented reality to overlay the Pokémon franchise onto the real world and rapidly swept the globe. From Chicago to Oslo to Enoshima, players flooded the streets, hoping to catch a Jigglypuff, a Squirtle, or (if exceptionally lucky) an ultra-rare Galarian Zapdos, floating just above the real world and tantalizingly out of reach.

In short, this meant hundreds of millions of people pointing their phones at countless buildings. “Five hundred million people installed the app within 60 days,” says Brian McClendon, CTO of Niantic Spatial—the AI company Niantic spun off last May. According to Scopely, the game publisher that acquired Pokémon Go from Niantic around the same time, the game still had over 100 million active players in 2024—eight years after launch.

Now, Niantic Spatial is using this unparalleled crowdsourced data trove to build a world model. The trove consists of billions of photos of city landmarks captured by Pokémon Go players worldwide, each tagged with ultra-precise location metadata. World models are a fast-growing research direction that aims to ground the intelligence of LLMs in real-world environments.

The company’s latest product is a model capable of pinpointing your location on a map to within a few centimeters—using just a few snapshots of buildings or other landmarks. They aim to use it to help robots navigate more precisely where GPS is unreliable.

As the technology’s first large-scale validation, Niantic Spatial has just partnered with Coco Robotics—a startup deploying last-mile food-delivery robots across multiple U.S. and European cities. “Everyone thinks AR is the future—AR glasses are coming,” says McClendon. “But robots got there first.”

From Pikachu to Pizza Delivery

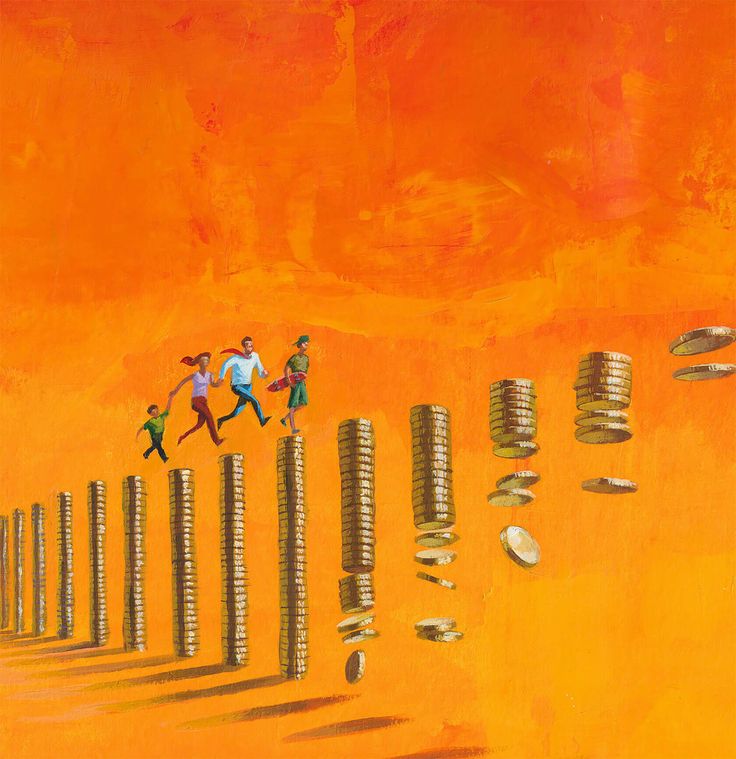

Coco Robotics deploys roughly 1,000 suitcase-sized robots in Los Angeles, Chicago, Jersey City, Miami, and Helsinki—each capable of carrying up to eight extra-large pizzas or four grocery bags. According to CEO Zach Rash, these robots have completed over half a million deliveries and traveled millions of miles across all weather conditions.

Yet to compete with human riders, Coco’s robots—which travel at about 5 mph on sidewalks—must be highly reliable. “Our best work happens when we arrive exactly on time, right when we say we will,” says Rash. That means no getting lost.

Coco’s challenge is its inability to rely on GPS. In cities, radio signals bounce between buildings and interfere with one another, weakening GPS reception. “We make deliveries in many dense areas with high-rises, underground tunnels, and overpasses—places where GPS simply never works well,” says Rash.

“Urban canyons are where GPS performs worst globally,” says McClendon. “That blue dot on your phone often drifts by 50 meters—placing you in another neighborhood, facing the wrong direction, or even on the opposite side of the street.” This is precisely the problem Niantic Spatial aims to solve.

Over the past few years, Niantic Spatial has been organizing data generated by players of Pokémon Go and of Ingress, Niantic’s earlier mobile AR game launched in 2013, to build a Visual Positioning System (VPS), which determines your location based on what you see. “Making Pikachu run realistically down the street and enabling Coco’s robots to navigate cities safely and precisely are fundamentally the same problem,” says John Hanke, CEO of Niantic Spatial.

“Visual positioning isn’t new,” says Konrad Wenzel of ESRI, a digital mapping and geospatial analytics firm. “But clearly, the more cameras out there, the better it works.”

Niantic Spatial trained its model on 30 billion images captured in urban environments. These images are especially dense around “hotspots”—key locations in Niantic games where players are encouraged to gather, such as Pokémon Gyms. “We have over one million locations worldwide where we can pinpoint your position,” says McClendon. “We know exactly where you’re standing—within a few centimeters. Even more importantly, we know which direction you’re facing.”

The result? For each of those one million locations, Niantic Spatial holds thousands of photos taken from nearly identical positions—but from different angles, at different times of day, and under varying weather conditions. Each photo comes with rich metadata: the phone’s exact spatial position, orientation, pose, whether it was moving, speed, and direction.

The company trained its model on this dataset so that it can predict a camera’s precise location based solely on “what it sees,” even outside those one million hotspots, where image and location data are comparatively sparse.
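To make the idea of locating a camera from “what it sees” concrete, here is a toy sketch in Python. It is not Niantic Spatial’s method, which the article does not describe in detail. It assumes each reference photo has already been reduced to a numeric descriptor (a hypothetical feature vector) and tagged with a known position, and it estimates a new photo’s position as a similarity-weighted average of its closest matches. The names `ReferencePhoto` and `estimate_position` are invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class ReferencePhoto:
    """A stored photo: a hypothetical feature vector plus its known position."""
    descriptor: tuple[float, ...]
    east_m: float   # metres east of an arbitrary local origin
    north_m: float  # metres north of the same origin


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def estimate_position(query, references, k=3):
    """Average the positions of the k most similar reference photos,
    weighting each by its (non-negative) similarity to the query."""
    scored = sorted(
        ((cosine_similarity(query, ref.descriptor), ref) for ref in references),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    weights = [max(score, 0.0) for score, _ in scored]
    total = sum(weights)
    if total == 0:
        raise ValueError("no reference photo resembles the query")
    east = sum(w * ref.east_m for w, (_, ref) in zip(weights, scored)) / total
    north = sum(w * ref.north_m for w, (_, ref) in zip(weights, scored)) / total
    return east, north


# Illustrative data only: two reference photos with made-up descriptors.
references = [
    ReferencePhoto((1.0, 0.0), east_m=0.0, north_m=0.0),
    ReferencePhoto((0.0, 1.0), east_m=10.0, north_m=20.0),
]
print(estimate_position((0.2, 0.9), references, k=2))
```

A lookup like this only works where reference photos are dense. A trained model, as the article describes, is meant to generalize to places where they are sparse.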

In addition to GPS, Coco’s robots—which carry four cameras—now also use this model to determine where they are and where they need to go. Mounted at hip height and facing in all directions, the robots’ cameras offer a field of view somewhat different from that of Pokémon Go players—but Rash says adapting the data wasn’t complex.
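The article does not say how Coco combines the two signals. One common textbook approach to merging two independent position estimates is inverse-variance weighting, sketched below in Python as an illustration only. The function name `fuse_estimates` and the uncertainty figures are assumptions: 50 meters echoes the GPS drift McClendon describes, and 0.05 meters stands in for “a few centimeters.”

```python
import math


def fuse_estimates(gps_pos, gps_sigma_m, vps_pos, vps_sigma_m):
    """Combine two independent (east, north) estimates, in metres.

    Each estimate is weighted by the inverse of its variance, so the
    more certain source dominates. Returns the fused position and the
    standard deviation of the fused estimate.
    """
    w_gps = 1.0 / gps_sigma_m ** 2
    w_vps = 1.0 / vps_sigma_m ** 2
    total = w_gps + w_vps
    fused = tuple(
        (w_gps * g + w_vps * v) / total for g, v in zip(gps_pos, vps_pos)
    )
    return fused, math.sqrt(1.0 / total)


# Assumed numbers: GPS is off by tens of metres in an urban canyon,
# while the visual fix is good to a few centimetres.
position, sigma = fuse_estimates(
    gps_pos=(30.0, -40.0), gps_sigma_m=50.0,
    vps_pos=(0.0, 0.0), vps_sigma_m=0.05,
)
print(position, sigma)
```

With uncertainties this lopsided, the fused position sits almost exactly on the visual fix, and GPS mainly serves as a fallback where no visual match is available.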

Competitors also use visual positioning systems. For example, Starship Technologies—a robot delivery company founded in Estonia in 2014—says its robots build 3D maps of surroundings using sensors, marking building edges and streetlight positions.

But Rash is betting that Niantic Spatial’s technology gives Coco a competitive edge. He believes it will enable robots to stop precisely at the correct pickup spot outside restaurants without obstructing anyone, and to park directly at customers’ doorsteps rather than several steps short, which used to happen often.

The Cambrian Explosion of Robots

Niantic Spatial originally built its visual positioning system for augmented reality, says Hanke. “If you’re wearing AR glasses and want the virtual world locked to your line of sight, you need some way to achieve that. But now we’re witnessing a Cambrian explosion in robotics.”

Some robots must share space with humans—on construction sites and sidewalks. “For robots to integrate into such environments without disturbing people, they need spatial understanding comparable to ours,” says Hanke. “When a robot gets bumped or jostled, we help it precisely relocalize itself.”

The partnership with Coco Robotics is only the beginning. Hanke says Niantic Spatial is assembling the first components of what he calls a “Living Map”—an ultra-high-precision virtual-world simulation that evolves alongside the physical world. As Coco’s and other companies’ robots traverse the globe, they’ll generate fresh map data, making the digital twin increasingly detailed.

To Hanke and McClendon, maps aren’t just becoming more precise—they’re increasingly used by machines. This changes the very purpose of maps. Maps have long helped humans locate themselves. From 2D to 3D to 4D (think real-time simulations like digital twins), the underlying principle remains unchanged: points on the map correspond to points in space—or time.

But maps designed for machines may need to resemble tour guides, packed with information humans take for granted. Niantic Spatial and firms like ESRI aim to enrich maps with descriptive annotations—telling machines exactly what they’re seeing, labeling every object with a suite of attributes. “The task of our era is to build useful world descriptions for machines,” says Hanke. “The data we possess is an excellent starting point for understanding how the world’s interconnected systems operate.”

World models are extremely hot right now—and Niantic Spatial knows it. LLMs appear to know everything but lack common sense when interpreting and interacting with everyday environments. World models aim to fix that. Some companies—including Google DeepMind and World Labs—are developing models that instantly generate virtual fantasy worlds, then use them as training grounds for AI agents.

Niantic Spatial says it tackles the problem from a different angle. “If you push mapping far enough, you ultimately capture everything,” says McClendon. “We’re not there yet—but that’s where we’re headed. Right now, I’m intensely focused on reconstructing the real world.”
