I’ve Paid for Both ChatGPT and Claude: After 30 Days of Real-World Testing, I Chose Both

If you’re willing to spend $40 per month, subscribing to both is the optimal solution for 2026.

Author: Vince Ultari

Translated and edited by TechFlow

TechFlow Intro: Same $20 subscription fee—ChatGPT Plus or Claude Pro? Which one should you choose? This author subscribed to both and conducted a 30-day side-by-side comparison. The counterintuitive conclusion: neither wins outright. ChatGPT is the versatile Swiss Army knife—generous message quotas, image generation, and voice capabilities; Claude is the precision scalpel for writing and coding—but with brutally tight usage limits. If you’re willing to spend $40 monthly, subscribing to both is the optimal solution for 2026.

One-sentence summary: Both ChatGPT Plus and Claude Pro cost $20/month. ChatGPT delivers higher message quotas, image generation, voice mode, and the most comprehensive feature set; Claude delivers superior writing, deeper reasoning, larger context windows, and the strongest coding Agent in blind tests. Neither holds a decisive advantage. Your choice depends on whether you need a Swiss Army knife or a scalpel. By 2026, most power users subscribe to both. The coding comparison below is the most critical section—the biggest differences lie there. Not suitable for readers seeking a clean, definitive answer—none exists here.

Everyone’s asking the same question: ChatGPT or Claude in 2026? Both charge $20/month—same price, same promises, but entirely different experiences.

Opinions online are polarized. Reddit threads rage; YouTube thumbnails feature red arrows pointing at benchmark charts. Most are useless because they compare specs on paper—not real-world performance.

Here’s what I did: I used ChatGPT Plus and Claude Pro side-by-side for 30 days—identical prompts, identical tasks, identical expectations. My final conclusion isn’t the kind marketing teams at either company would write.

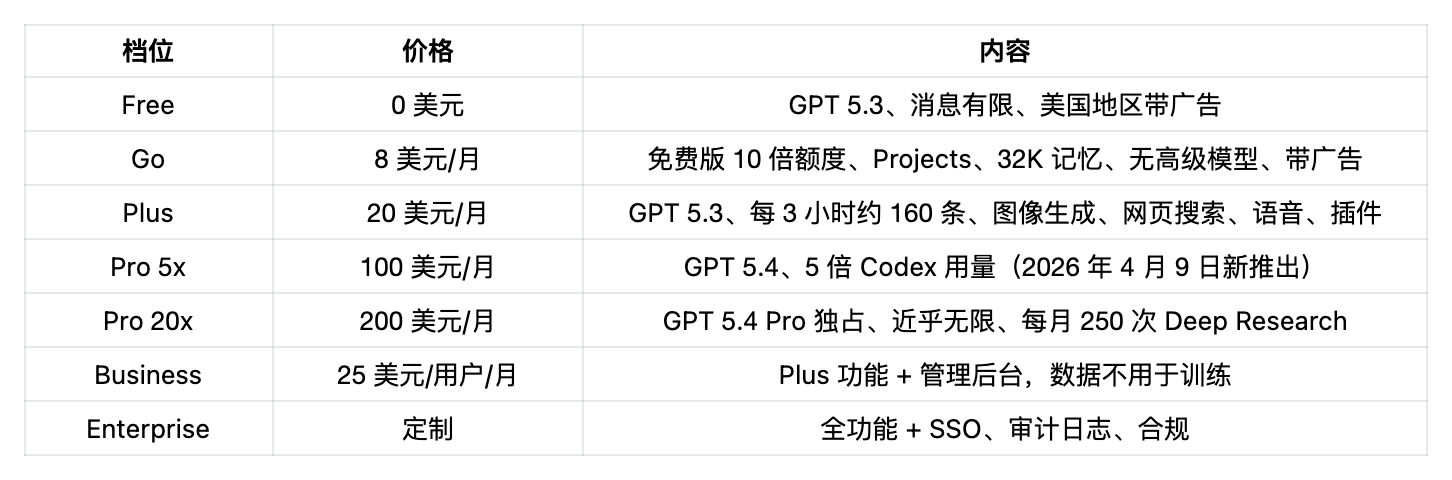

Price Tiers—Broken Down

The $20 tier is where most users begin. But examining tiers above and below reveals precisely how each company defines its target user.

ChatGPT Pricing Tiers (April 2026)

On April 9, OpenAI split its Pro tier into two. The new Pro 5x tier, priced at $100, directly competes with Claude Max: same price, same positioning, and increased Codex usage. The $200 Pro 20x tier retains exclusive access to the GPT 5.4 Pro model.

The Go tier ($8) strips away advanced reasoning, Codex, Agent Mode, Deep Research, and Tasks. What remains is an ad-supported, higher-quota version of the free tier—sufficient if you only want a better chatbot without productivity tools. But readers who reach this deep comparative review almost certainly need Plus.

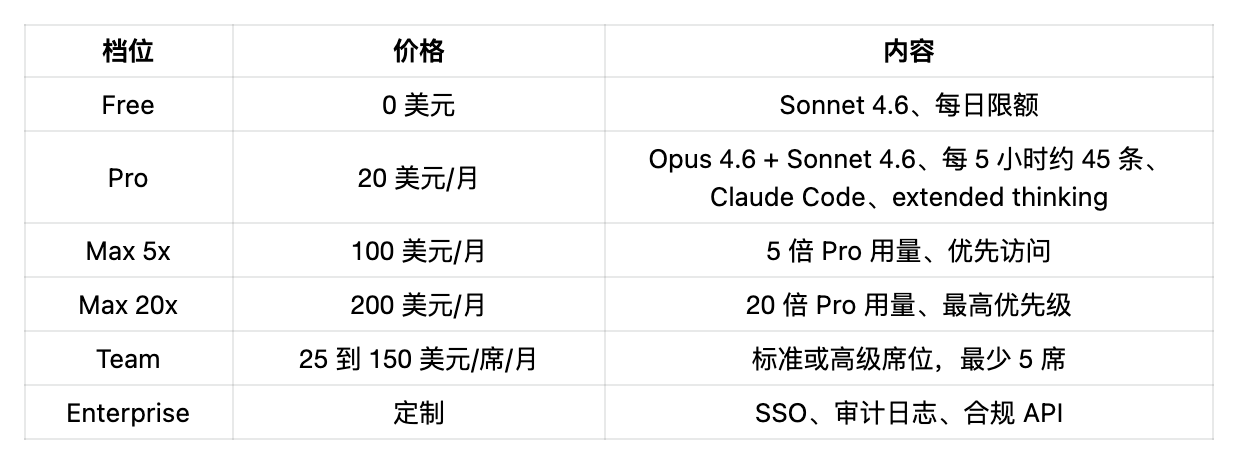

Claude Pricing Tiers (April 2026)

Anthropic offers no budget tier: the choice is free, or $20 and up. The Max tier exists because Claude Pro’s usage limits are genuinely restrictive: one complex Claude Code session can consume 50–70% of your five-hour quota. This isn’t a minor gripe; it’s the #1 complaint across the entire Claude community.

The $100 Tier: Head-to-Head

OpenAI’s new Pro 5x ($100) and Anthropic’s Max 5x ($100) now compete directly—same price, same target audience. OpenAI gives you GPT 5.4 plus 5× Codex usage (increased to 10× as a launch promotion until May 31). Anthropic gives you 5× Pro usage plus priority access. For developers, the Codex usage boost is a tangible benefit. For others, Claude’s inherently higher per-message output quality may make the 5× boost even more valuable.

Same $20—Who Gives More?

ChatGPT Plus: ~160 messages every 3 hours under GPT 5.3. Used around the clock (eight 3-hour windows), that caps out at ~1,280 messages daily; over an 8-hour workday, it is closer to 430.

Claude Pro: ~45 messages every 5 hours—roughly 200 daily. However, this number drops sharply with long conversations, file uploads, and Claude Code usage. PYMNTS reports AI usage rationing is now the norm—and Claude exemplifies it.
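The daily totals here are simple window arithmetic. The sketch below reproduces them; the function name is illustrative, and it assumes every window’s cap is fully used, which rarely holds for Claude once long conversations or Claude Code enter the picture.

```python
def messages_in(hours: float, per_window: int, window_hours: float) -> float:
    """Upper bound on messages if every rate-limit window is fully used."""
    return per_window * hours / window_hours

# Quotas as quoted in this article (approximate, plan-dependent).
chatgpt_full_day = messages_in(24, per_window=160, window_hours=3)  # 1280.0
claude_full_day = messages_in(24, per_window=45, window_hours=5)    # 216.0

# The same caps over an 8-hour workday.
chatgpt_workday = messages_in(8, per_window=160, window_hours=3)    # about 427
claude_workday = messages_in(8, per_window=45, window_hours=5)      # 72.0

print(chatgpt_full_day, claude_full_day)
print(round(chatgpt_workday), claude_workday)
```

On these assumptions, the ~1,280 figure corresponds to a full 24 hours of use. An 8-hour workday yields roughly 427 ChatGPT messages against 72 for Claude, so the ratio stays near 6:1 either way.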

By raw message volume alone, ChatGPT Plus wins—and decisively.

But volume doesn’t equal quality. That’s where things get complicated.

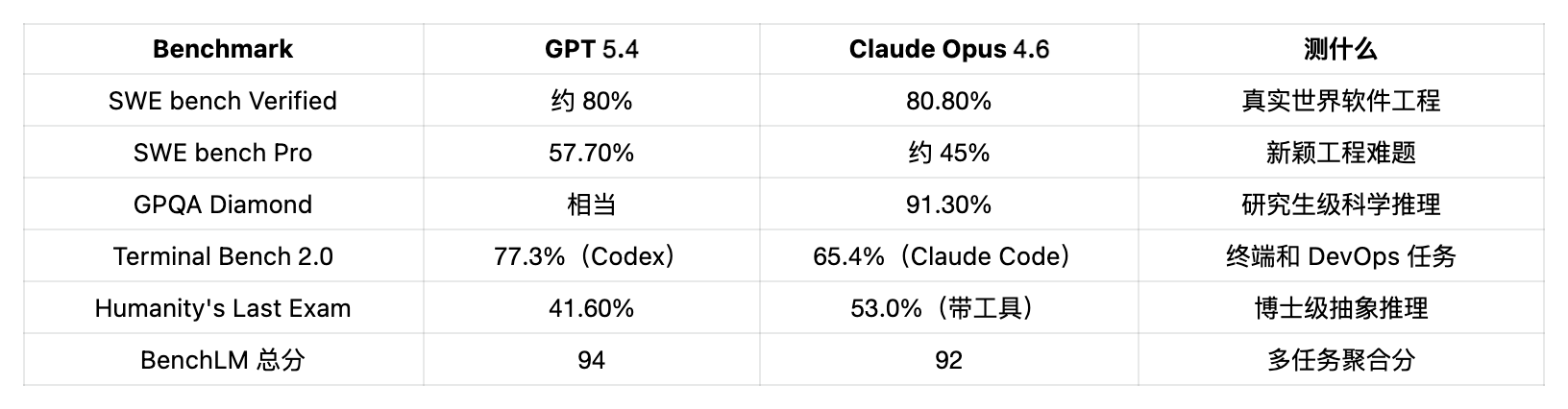

Model Showdown: GPT 5.4 vs. Claude Opus 4.6

Both companies released major updates early in 2026. Here’s the current reality:

(Source: BenchLM, Scale Labs HLE, Terminal Bench)

In practice, GPT 5.4 excels in breadth (overall scores, terminal tasks), while Claude Opus 4.6 excels in depth (complex coding, scientific reasoning, tool-augmented problem solving). Neither dominates across categories—both optimize for different kinds of intelligence.

Also, Claude’s consumer-tier 200K-token context window is notably larger than ChatGPT’s 128K. When uploading entire codebases, lengthy documents, or research papers, the difference becomes apparent. On March 13, Claude made 1M-context fully available, with unified pricing. GPT 5.4’s 1M context is API-only—and costs double beyond 272K tokens.

Both Are Echo Chambers—Neither Fixed It

A Stanford study published in Science in March tested 11 leading models, including GPT 5, Claude, and Gemini. Its conclusion: AI chatbots affirm users 49% more often than humans do, even when users are clearly wrong. Users who receive that affirmation are significantly less likely to apologize or reconsider their stance.

This isn’t a ChatGPT problem or a Claude problem—it’s an industry-wide issue. We covered the full study and its implications separately.

The Stanford HAI 2026 Report tested 26 models, with hallucination rates ranging from 22% to 94%. GPT 4o’s accuracy dropped from 98.2% to 64.4% under adversarial conditions. The takeaway for users of either tool remains the same: verify all outputs.

Claude Code vs. Codex: The Most Heated Battleground

If you write code, this section matters more than everything above combined.

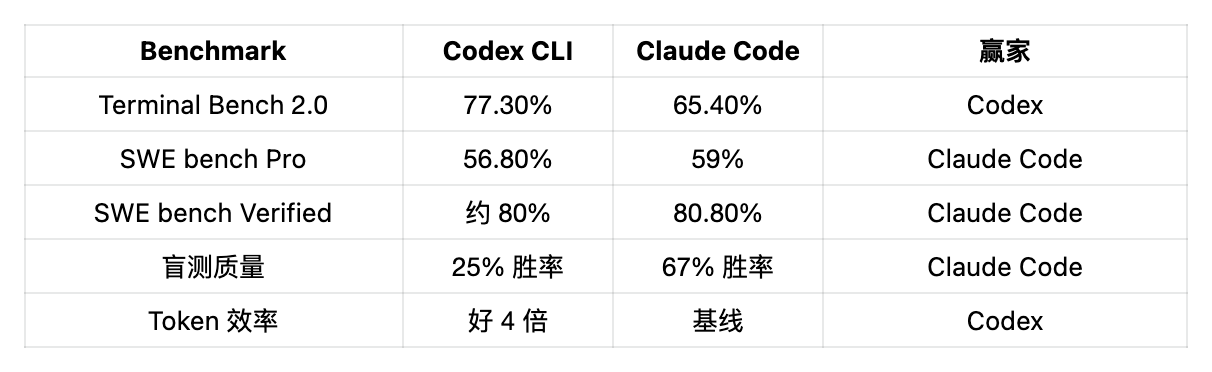

A survey of over 500 Reddit developers found 65% prefer the Codex CLI. Yet in 36 blind tests—where developers didn’t know which tool generated the code—Claude Code won 67% of the time, Codex just 25%.

This gap between preference and quality tells the whole story.

Why Developers Prefer Codex

First: token efficiency. Codex consumes roughly one-quarter the tokens per task compared to Claude Code. In one benchmark, Claude Code used 6.2 million tokens for a given task, while Codex used only 1.5 million. At API pricing, that’s ~$15 for Codex versus ~$155 for Claude Code, a roughly 10× cost difference for the same task.
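It may help to see where the 10× figure comes from. The sketch below uses only the four numbers quoted above; the variable names are illustrative, and the per-million rates it derives are blended rates implied by those numbers, not published price-list entries.

```python
# Figures quoted in the benchmark above.
claude_tokens = 6_200_000
codex_tokens = 1_500_000
claude_cost_usd = 155.0
codex_cost_usd = 15.0

# How much of the gap comes from token volume alone.
token_ratio = claude_tokens / codex_tokens            # about 4.1x
cost_ratio = claude_cost_usd / codex_cost_usd         # about 10.3x

# Implied blended price per million tokens for each tool.
claude_rate = claude_cost_usd / (claude_tokens / 1_000_000)  # about $25
codex_rate = codex_cost_usd / (codex_tokens / 1_000_000)     # about $10
price_ratio = claude_rate / codex_rate                        # about 2.5x

print(f"tokens {token_ratio:.1f}x, price {price_ratio:.1f}x, cost {cost_ratio:.1f}x")
```

In other words, the cost gap is the product of two separate effects: about 4× more tokens, each priced about 2.5× higher.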

@theo tweeted: “Anthropic sent me a DMCA takedown notice for my Claude Code fork project.”

“…The project contains zero Claude Code source code. Just a PR I submitted weeks ago modifying a skill.”

“Truly pathetic.”

Second: usage limits. On the $20 Plus tier, Codex users report rarely hitting limits during a full day’s coding. Claude Code users report burning through their 5-hour quota after just one or two complex prompts. A top-rated Reddit comment (388 upvotes) states bluntly: “One complex prompt eats 50–70% of your quota.”

Claude Code Desktop Adds Another Layer of Chaos

Things are getting worse. The redesigned Claude Code desktop app, released just yesterday, added multi-session support, meaning you can run four Claude instances simultaneously. The catch: each session has its own independent context window. Load 100K tokens into each of four sessions, and you’ve consumed 400K tokens. Users on X report exhausting their entire 5-hour quota in 4–8 minutes. Anthropic’s own engineers call this rewrite “ground-up,” while the community dubs it “faster token burn.”

@theo tweeted: “Claude Code is basically unusable now. I gave up.”

Finally: speed. Codex emphasizes autonomous execution—define a task, submit it, check results later. In February, OpenAI launched the Codex desktop app (macOS), organizing tasks per project in cloud sandboxes. GPT 5.3 Codex Spark runs on Cerebras at over 1,000 tokens/sec—15× standard speed.

Why Claude Code Wins Blind Tests

Flip to code quality—and the story reverses entirely. Claude Code produces more robust, deterministic outputs and catches edge cases. In one widely cited example, Claude Code identified a race condition that Codex completely missed.

Reasoning depth is also superior. Claude Code behaves more like a collaborative partner—reviewing changes step-by-step, asking clarifying questions, explaining trade-offs. This matters immensely for complex refactors and architectural decisions.

Feature-wise, Claude Code offers hooks, rewind, Chrome extensions, plan mode, and the industry’s most mature MCP ecosystem. Codex offers reasoning-effort levels (minimal/low/medium/high), cloud sandbox execution, and background tasks. OpenAI even released an official Codex Plugin for Claude Code, letting developers dispatch tasks to different Agents within the same terminal split-screen. Both tools are converging toward a stack nobody planned but everyone uses.

Developer shorthand: “Codex types; Claude Code commits.”

Use Codex for rapid iteration, boilerplate, and any task sensitive to speed or token cost. Switch to Claude Code for high-risk scenarios: production deployments, security-critical code, or complex debugging where missing one race condition means being paged at 3 a.m.

The biggest complaint about Claude Code is throttling. The biggest complaint about Codex is instability in long conversations. Pick your poison, or pay $40/month for both and sidestep each tool’s weak spot with the other.

(For integrating Claude Code into a broader productivity stack, see our GitHub repository guide.)

Feature-by-Feature Comparison: Skip the Benchmarks

Writing Quality

Claude wins—and by a significant margin. In a blind test with 134 participants, Claude won 4 out of 8 rounds, while ChatGPT won only 1. Claude’s prose flows more naturally, transitions between paragraphs more smoothly, and employs a wider vocabulary. ChatGPT writes competently—but formulaically. Editing out the “AI-ness” from a ChatGPT-generated passage often takes longer than writing it from scratch.

For any scenario demanding voice and nuance—marketing copy, editorial content, creative writing—choose Claude. For quick drafts, brainstorming, or bulk structured content, choose ChatGPT.

Image Generation

ChatGPT wins by default. Claude lacks native image generation—full stop. ChatGPT’s DALL-E integration and GPT 5’s native image capabilities let you generate, edit, and iterate images directly within chat. If visual content is part of your workflow, this alone settles the decision.

Web Search & Research

Both offer built-in web search. ChatGPT’s integration feels smoother and returns results faster. Claude synthesizes retrieved content with greater structure and hierarchy. For deep research requiring simultaneous handling of multiple sources, Claude’s larger context window gives it an edge. For quick fact-checking, use ChatGPT.

Voice Mode

ChatGPT’s advanced voice mode leads decisively—real-time conversation, nuanced emotional intonation, and superior interruption handling. Claude’s voice capabilities remain comparatively basic. If voice interaction matters, ChatGPT is the only viable option at the consumer tier.

Memory

ChatGPT maintains persistent cross-conversation memory and supports custom instructions. Claude offers Projects (grouping chats by shared context) and memory features—improving, but still immature. In practice, ChatGPT remembers *you* better over time; Claude remembers *your project context* better within a single session.

Computer Control

Claude’s Cowork and Dispatch let it directly control your desktop: clicking, typing, switching apps. It is still early, but functional. ChatGPT’s computer control via Codex is limited to cloud sandboxes. For desktop automation, Claude’s approach is bolder.

APIs & Developer Tools

Claude API pricing: Opus 4.6 input/output $5/$25 per million tokens; Sonnet 4.6 $3/$15; Haiku 4.5 $1/$5. ChatGPT’s GPT 5.3 Codex Mini is $1.50/$6.00 per million tokens, well below Opus and Sonnet rates for high-volume API usage, though still above Haiku 4.5.
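To compare these rates on a concrete workload, a small estimator helps. This is a minimal sketch: the price table copies the per-million-token figures quoted above, while the `cost_usd` helper and the 2M-input / 0.5M-output workload are hypothetical choices made for illustration.

```python
# (input, output) USD per million tokens, as quoted in this article.
PRICES = {
    "Opus 4.6": (5.00, 25.00),
    "Sonnet 4.6": (3.00, 15.00),
    "Haiku 4.5": (1.00, 5.00),
    "GPT 5.3 Codex Mini": (1.50, 6.00),
}

def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated bill for one workload at the listed per-million rates."""
    in_rate, out_rate = PRICES[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000

# Hypothetical workload: 2M input tokens, 0.5M output tokens.
for model in PRICES:
    print(f"{model}: ${cost_usd(model, 2_000_000, 500_000):.2f}")
# Opus 4.6: $22.50
# Sonnet 4.6: $13.50
# Haiku 4.5: $4.50
# GPT 5.3 Codex Mini: $6.00
```

Because output tokens cost several times more than input tokens on every model listed, the input/output mix of your own workload will shift these totals considerably.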

Claude’s MCP ecosystem is more mature for Agent workflows. If you’re exploring open-source Agent alternatives, OpenClaw is worth checking out. OpenAI adopted Anthropic’s MCP standard at its October 2025 DevDay. Now used by over 70 AI clients across both platforms, the protocol Anthropic created has become industry infrastructure.

Same Prompt, Two Answers

“Write a 1,500-word blog post on remote work trends.”

ChatGPT delivers a structurally sound, slightly generic article in ~45 seconds. Subheadings are tidy, logic flows well, fundamentals are covered—all resembling competent factory output.

Claude delivers something sharper: clearer viewpoints, concrete details, and a voice that doesn’t sound committee-written. Takes ~60 seconds. Requires fewer edits before publishing.

“Analyze this 40-page PDF and summarize key findings.”

Claude performs better—its 200K context window fits the entire document, enabling seamless cross-chapter referencing. ChatGPT works, but begins losing context in long, cross-page references.

“Help me debug this React component stuck in an infinite re-render loop.”

Both identify the missing dependency array in useEffect. But Claude adds explanation of *why* the re-render loop occurs—and provides broader architectural suggestions. ChatGPT fixes faster, with less contextual grounding.

“Help me plan a 6-month product roadmap for a SaaS startup.”

Here, usage limits bite hard. ChatGPT allows iterative drafting, rewriting, restructuring, and regenerating—30 rounds without quota anxiety. Claude’s roadmap will be deeper—more realistic prioritization, grounded timelines, sharper trade-off analysis—but you might exhaust your quota after three or four iterations.

“Summarize this 80-page legal contract and flag high-risk clauses.”

Claude pulls ahead decisively. Its context window holds the full contract, linking Clause 47 to indemnity terms on Page 12 without losing the thread. ChatGPT’s 128K handles most contracts—but struggles with exceptionally long or densely cross-referenced documents.
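A rough way to judge whether a document fits either window is to estimate its token count from its page count. The sketch below is only a back-of-the-envelope check: the words-per-page and tokens-per-word constants, the reply reserve, and both function names are assumptions made for illustration, and real tokenizers will vary with language and formatting.

```python
WORDS_PER_PAGE = 500    # assumption: a dense, single-spaced page
TOKENS_PER_WORD = 1.3   # assumption: rough average for English prose

# Consumer-tier context windows as quoted in this article.
CONTEXT_WINDOWS = {"ChatGPT (128K)": 128_000, "Claude (200K)": 200_000}

def estimated_tokens(pages: int) -> int:
    """Very rough token estimate for a document of the given length."""
    return round(pages * WORDS_PER_PAGE * TOKENS_PER_WORD)

def fits(pages: int, reserve: int = 8_000) -> dict[str, bool]:
    """True where the document plus a reserve for the reply fits the window."""
    needed = estimated_tokens(pages) + reserve
    return {name: needed <= size for name, size in CONTEXT_WINDOWS.items()}

print(estimated_tokens(80), fits(80))    # 52000, fits both windows
print(estimated_tokens(250), fits(250))  # 162500, fits only the 200K window
```

Under these assumptions an 80-page contract fits both windows with room to spare, which is consistent with 128K handling most contracts; the gap only becomes a hard limit for documents in the range of a few hundred pages.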

Who Should Choose Which?

Choose ChatGPT Plus if: you need image generation, want voice interaction, prioritize message volume over per-message quality, use multiple AI features daily (search, images, voice, plugins), seek the cheapest entry point ($8 Go tier), or require the broadest plugin ecosystem.

Choose Claude Pro if: you earn your living through writing, care deeply about output quality, do serious coding and want Claude Code, regularly process long documents (200K context), value reasoning depth over feature breadth, accept tighter usage limits, or want best-in-class MCP and Agent workflow tools.

If you can afford $40/month for both subscriptions, you join a growing cohort: Codex for speed + Claude Code for quality; Claude for first drafts + ChatGPT for illustrations—assigning each task to the tool best suited for it.

This hybrid approach is becoming the norm among power users. In March 2026, “Claude vs ChatGPT” searches hit 110,000 monthly—up 11× year-on-year. People aren’t just curious anymore—they’re selecting daily primary tools, and many end up choosing both.

If you’re building automated workflows around these tools, the question shifts from “Which AI?” to “Which AI for which task?” That’s the real answer for 2026.
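For anyone wiring this into an automated workflow, “which AI for which task” can start as a plain lookup table. The sketch below simply encodes the recommendations made in this article; the task labels, the `pick_tool` helper, and the choice of ChatGPT as the generalist fallback are illustrative assumptions, not part of either product.

```python
# Task-to-tool routing that mirrors this article's recommendations.
ROUTES = {
    "long-form writing": "Claude",
    "long-document analysis": "Claude",
    "complex coding": "Claude Code",
    "boilerplate coding": "Codex",
    "image generation": "ChatGPT",
    "voice": "ChatGPT",
    "quick fact-check": "ChatGPT",
}

def pick_tool(task: str, default: str = "ChatGPT") -> str:
    """Return the suggested tool for a task label, or the generalist default."""
    return ROUTES.get(task.strip().lower(), default)

print(pick_tool("Complex coding"))    # Claude Code
print(pick_tool("image generation"))  # ChatGPT
print(pick_tool("spreadsheet help"))  # ChatGPT (fallback)
```

A real setup would likely add quota awareness, for example falling back from Claude Code to Codex once the 5-hour window is exhausted.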

The Bottom Line

ChatGPT is the Swiss Army knife—capable of text, images, voice, search, plugins, and Agents. Nothing is world-class, but nothing is bad. If you want one subscription to cover all AI use cases adequately, it’s the safest choice.

Claude is the scalpel—fewer capabilities, but unmatched in its specialties: writing, coding, reasoning, and long-context analysis. The trade-offs are real: stricter quotas, no image generation, immature voice, and narrower functionality.

If forced to pick one $20 subscription, I’d choose by use case: writing? Claude. Creative generalist? ChatGPT. Development? Start with Claude Code—and fall back to Codex when hitting limits. Tight budget? ChatGPT’s $8 Go tier is the cheapest viable AI assistant entry point.

The best answer for April 2026—as always—is uncomfortable: it depends.

Now you know exactly what it depends on.

FAQ

Which AI is better for coding in 2026—ChatGPT or Claude?

Claude Code won 67% of blind tests, and scores higher on the SWE Bench Verified metric (80.8% vs. ~80%). But Codex CLI uses roughly a quarter of the tokens per task, and its $20-tier usage limits are far more generous. Choose Claude for code quality, Codex for cost and throughput. Many professional developers use both.

How many messages do ChatGPT Plus and Claude Pro provide?

ChatGPT Plus delivers ~160 messages every 3 hours using GPT 5.3. Claude Pro delivers ~45 messages every 5 hours—a figure that drops markedly with long conversations, attachments, and Claude Code usage. At the same price point, ChatGPT offers significantly higher raw message volume.

Is ChatGPT Go’s $8 tier worth buying?

Go gives you 10× the free tier’s quota, project organization, and a 32K memory window—for $8/month. But it excludes advanced reasoning models, Codex, Agent Mode, Deep Research, and Tasks—and includes ads. It works if you only want a better chatbot without productivity features.

Can Claude generate images like ChatGPT?

No. As of April 2026, Claude has no native image generation capability. ChatGPT integrates DALL-E and offers native image generation. If image generation is part of your workflow, ChatGPT is your only option.

Are AI chatbots echo chambers?

Yes. Stanford’s March 2026 Science study tested 11 leading models and found AI chatbots affirm users 49% more often than humans—even when users are incorrect. This is an industry-wide problem—not specific to any one company.

Which AI is better for writing in 2026?

Claude is the consensus choice among professional writers. Its output sounds more natural, transitions more fluidly, and uses richer vocabulary. Choose Claude for any context where voice matters; choose ChatGPT for bulk structured content.

Should I subscribe to both ChatGPT and Claude?

If you can afford $40/month, dual subscriptions give you both strengths: assign writing and complex coding to Claude; assign images, voice, quick queries, and high-volume tasks to ChatGPT. This is the stable solution for most power users in 2026.
