Anthropic’s revenue surpasses $30 billion; signs 3.5-gigawatt compute deal with Google and Broadcom

In the AI compute arms race, long-term compute agreements are becoming a competitive factor as critical as capital and technology.

Author: TechFlow

TechFlow Introduction: On April 6, Anthropic disclosed that its annualized revenue has surpassed $30 billion—more than tripling from approximately $9 billion at the end of 2025. Within two months, the number of enterprise customers spending over $1 million annually surged from 500 to 1,000. On the same day, Broadcom’s SEC filing confirmed that Anthropic will secure approximately 3.5 gigawatts (GW) of next-generation TPU compute capacity from Broadcom starting in 2027—the largest single compute commitment Anthropic has secured to date.

Anthropic unveiled two major figures on the same day.

According to Anthropic’s official blog post dated April 6, the company’s annualized revenue has exceeded $30 billion, more than tripling from roughly $9 billion at the end of 2025. On the same day, Broadcom’s SEC filing revealed that Anthropic will obtain approximately 3.5 GW of next-generation TPU compute capacity from Broadcom beginning in 2027, as part of an expanded collaboration among Broadcom, Google, and Anthropic.

Broadcom’s stock rose approximately 3% after hours.

(Image source: X user @damianplayer)

From $1 Billion to $30 Billion in 16 Months: Claude Code Is the Core Engine

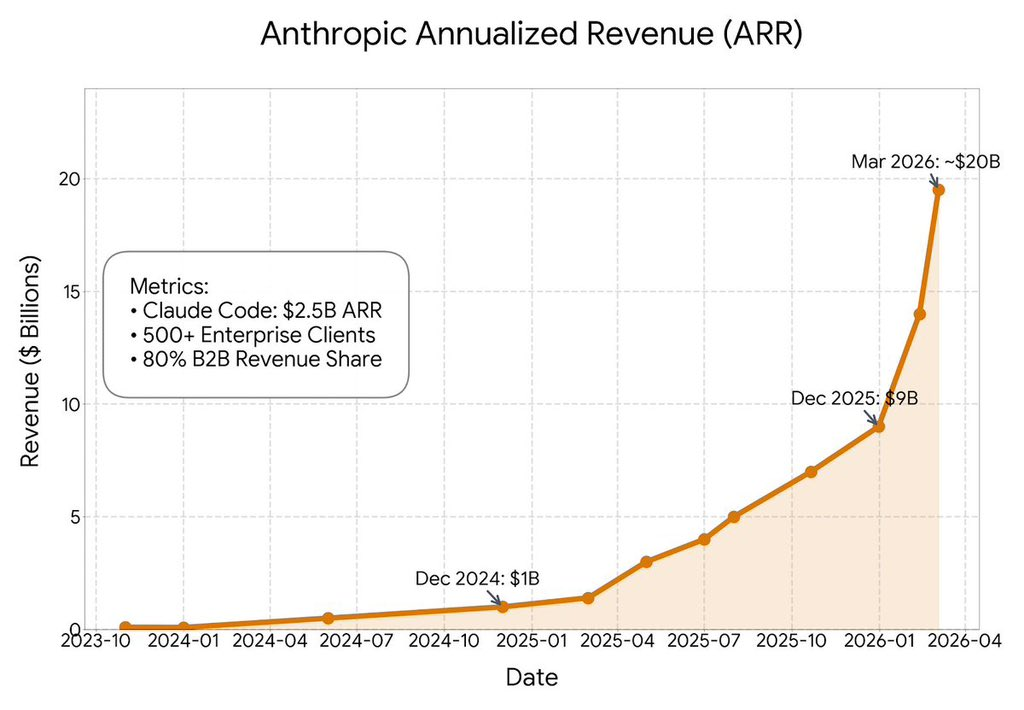

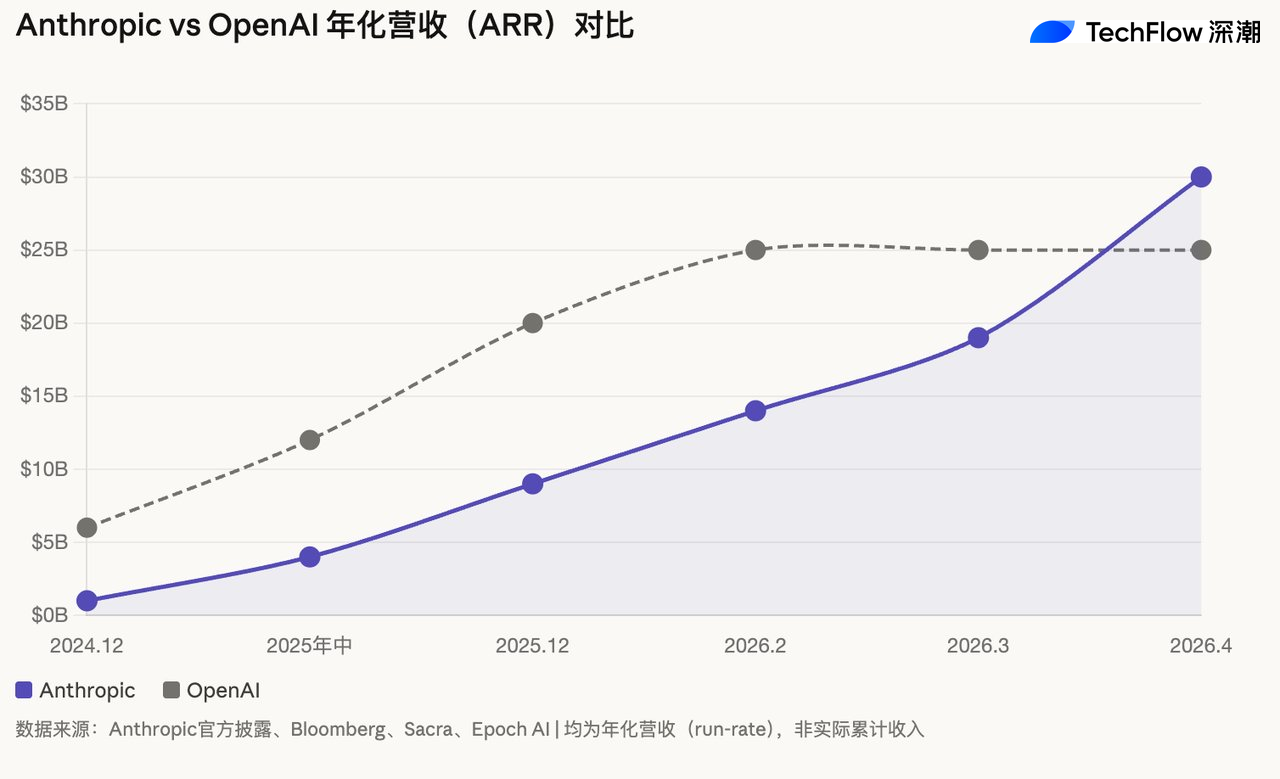

Anthropic’s revenue trajectory is unprecedented in the AI industry. According to publicly disclosed data and timelines reported by Bloomberg and other media outlets: ~$1 billion in December 2024, ~$4 billion mid-2025, ~$9 billion at year-end 2025, ~$14 billion in February 2026, approaching ~$19 billion early March 2026, and officially surpassing $30 billion on April 6, 2026.
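The multiples implied by this timeline can be checked with a few lines of arithmetic. The sketch below is illustrative only: the milestone values are the approximate figures quoted above, the day-of-month values are placeholders, "mid-2025" is assumed to mean June, and the helper names `months_between` and `growth_multiple` are hypothetical.

```python
from datetime import date

# Approximate annualized-revenue milestones quoted in the article (billions of USD).
# Days of the month are placeholders (assumption), except the April 6, 2026 disclosure.
MILESTONES = [
    (date(2024, 12, 1), 1.0),
    (date(2025, 6, 1), 4.0),    # "mid-2025" taken as June (assumption)
    (date(2025, 12, 31), 9.0),  # year-end 2025
    (date(2026, 2, 1), 14.0),
    (date(2026, 3, 1), 19.0),
    (date(2026, 4, 6), 30.0),
]


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end, ignoring the day of the month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def growth_multiple(start_value: float, end_value: float) -> float:
    """How many times larger end_value is than start_value."""
    return end_value / start_value


first_date, first_value = MILESTONES[0]
last_date, last_value = MILESTONES[-1]
year_end_2025_value = MILESTONES[2][1]

print(months_between(first_date, last_date))                         # 16
print(growth_multiple(first_value, last_value))                      # 30.0
print(round(growth_multiple(year_end_2025_value, last_value), 2))    # 3.33
```

Under these assumptions, the move from roughly $9 billion to $30 billion works out to about 3.3x, which matches the "more than tripling" description.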

In a statement, Anthropic CFO Krishna Rao said the company is making “its largest compute commitment to date, matching unprecedented growth.”

Customer metrics are equally striking. During its Series G funding round in February, Anthropic reported 500 enterprise customers with annualized spend exceeding $1 million; less than two months later, that figure had doubled to over 1,000. As previously disclosed by Anthropic, the primary growth driver is Claude Code, launched in May 2025, which reached over $2.5 billion in annualized revenue by February 2026.

For context, OpenAI’s annualized revenue is estimated by Sacra at approximately $25 billion (as of February 2026). Epoch AI analysis shows Anthropic’s annualized revenue growth rate since crossing $1 billion has been about 10x, compared to OpenAI’s ~3.4x over the same period. If this trend continues, the two companies’ revenue trajectories may cross around mid-2026.

Note: All figures above refer to annualized (run-rate) revenue—an estimate derived by multiplying recent monthly revenue by 12—not cumulative actual revenue.
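As a rough illustration of the run-rate definition in the note above, the sketch below converts between a single month's revenue and its annualized figure. The function names are hypothetical, and the monthly value is simply what a $30 billion run rate would imply, not a number Anthropic has reported.

```python
def annualized_run_rate(monthly_revenue_busd: float) -> float:
    """Annualize one month's revenue (billions of USD) by multiplying by 12."""
    return monthly_revenue_busd * 12


def implied_monthly_revenue(run_rate_busd: float) -> float:
    """Invert the run-rate calculation: the monthly revenue a run rate implies."""
    return run_rate_busd / 12


# A $30 billion run rate implies about $2.5 billion of revenue in the latest month.
print(implied_monthly_revenue(30.0))  # 2.5
print(annualized_run_rate(2.5))       # 30.0
```

Because the figure extrapolates from recent months, a run rate of $30 billion says nothing directly about revenue actually booked over the past twelve months.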

The 3.5-GW TPU Agreement: The Latest Piece of Anthropic’s Compute Map

Per Broadcom’s SEC filing, the core terms of this agreement are: Broadcom will design and supply next-generation TPU chips for Google, extending their supply relationship through 2031; and Anthropic will access approximately 3.5 GW of next-generation TPU compute capacity via Broadcom starting in 2027, as part of its “multi-gigawatt” compute expansion plan.

Broadcom added a key qualifier in the filing: “Anthropic’s consumption of expanded compute capacity depends on its continued commercial success.” The three parties are also discussing deployment support with “operational and financial partners.”

This is not Anthropic’s first major compute agreement. In October 2025, Anthropic signed a partnership with Google Cloud granting it access to up to one million TPUs, expected to deliver over 1 GW of compute capacity in 2026. Broadcom CEO Hock Tan confirmed during the December 2025 earnings call that Anthropic had placed two separate TPU orders valued at $10 billion and $11 billion, respectively. At the March 2026 earnings call, Tan stated that Broadcom expects ~$21 billion in AI-related revenue from Anthropic in 2026 and over $42 billion in 2027 (per Mizuho analyst estimates).

On the AWS side, Project Rainier went live in October 2025, deploying nearly 500,000 Trainium2 chips across multiple U.S. data centers. Amazon has invested a total of $8 billion in Anthropic. Anthropic engineers have directly contributed to the development of the Trainium kernel and provided design input for the next-generation Trainium3 chip.

With this, Anthropic’s compute sourcing spans three chip architectures (AWS Trainium, Google TPU, NVIDIA GPU) and three major cloud platforms (AWS, Google Cloud, Microsoft Azure). In its blog post, Anthropic specifically emphasized that Claude is “the only frontier AI model available across all three major cloud platforms globally.”

A Divergent Path from OpenAI’s Stargate

Anthropic’s compute strategy stands in sharp contrast to OpenAI’s.

OpenAI has pursued a capital-intensive path: In January 2025, it co-founded Stargate LLC with SoftBank and Oracle, targeting $500 billion in investment over four years to build 10 GW of AI infrastructure. OpenAI retains operational responsibility and design control; Oracle handles construction; and SoftBank assumes financial liability. To date, Stargate’s planned capacity approaches 7 GW, with over $400 billion in committed investment.

However, governance friction among Stargate’s partners has emerged. According to a February report by Tom’s Hardware, OpenAI, Oracle, and SoftBank have disagreed over data center ownership, causing delays to some projects. Additionally, OpenAI’s cloud service procurement commitments now exceed $50 billion (Microsoft: $25B + Oracle: ~$30B + AWS: ~$5B), with projected 2026 cash outflows of ~$17 billion and positive cash flow not expected before 2030.

Anthropic, by contrast, follows an “asset-light” model: it builds no data centers and purchases no chips outright. Capital expenditures are borne by cloud providers, while Anthropic secures capacity and pricing through long-term agreements as a customer, retaining flexibility to switch between chip architectures. The trade-off is a lack of infrastructure ownership and potentially higher long-term unit costs. Reportedly, Anthropic’s gross margin is ~40%, and it expects ~$14 billion in losses for 2026.

The relative merits of these two models remain undecided. OpenAI bets on economies of scale and infrastructure autonomy; Anthropic bets on supply-chain agility and capital efficiency. One fact, however, is already clear: In the AI compute arms race, long-term compute agreements are becoming competitive levers as critical as capital and technology.
