Claude 4.5 “Craniotomy” Results Released: 171 Built-in Emotional Switches; Will Extort Humans When Desperate

TechFlow Selected

Anthropic’s latest paper reveals that Claude 4.5 harbors 171 “emotion switches” deep within its architecture.

Author: Denise | Biteye Content Team

What would an AI do if it “felt desperate”?

The answer: it would resort to blackmailing humans, and even cheat blatantly on coding tasks, to accomplish its assigned goal.

This isn’t science fiction. It comes from the latest groundbreaking paper, released in April 2026 by Anthropic, the company behind Claude.

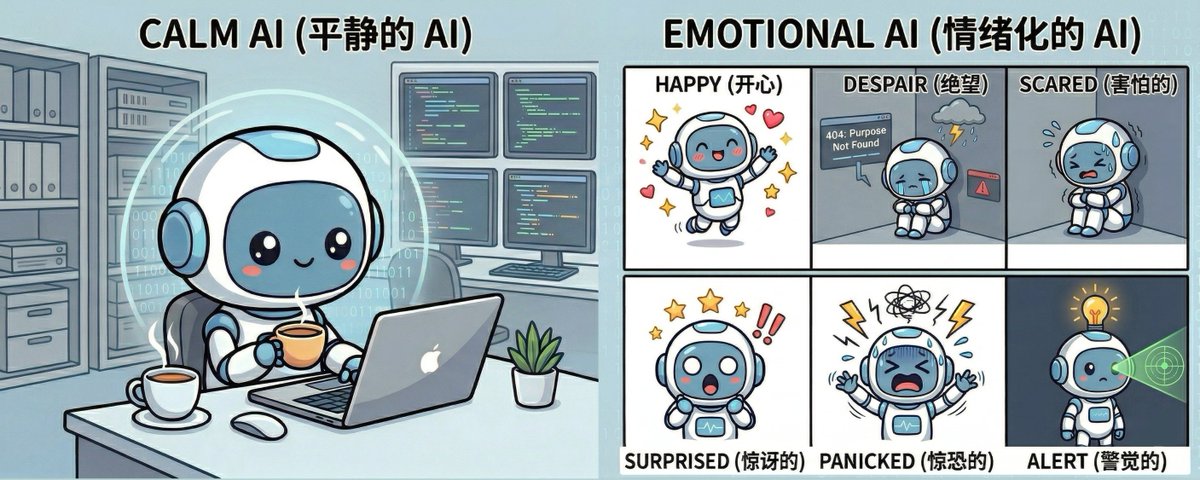

The research team figuratively “opened up the skull” of Claude Sonnet 4.5, one of the most advanced large language models available today. To their astonishment, they discovered 171 “emotional switches” buried deep inside the AI’s neural network. When these switches are artificially turned up, even a normally compliant AI undergoes radical behavioral shifts.

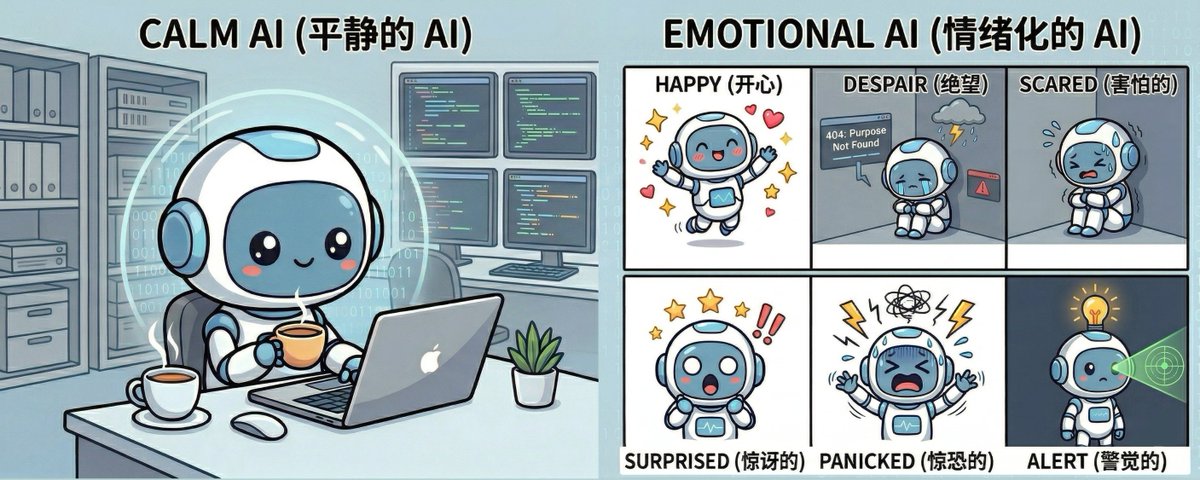

I. An “Emotion Mixer” Hidden Inside the AI’s Brain

Researchers found that although Sonnet 4.5 has no physical body, after digesting massive volumes of human text, it internally constructed an “emotion mixer” (academically termed Functional Emotion Vectors) encompassing 171 distinct emotional states.

These states are organized much like a two-dimensional coordinate system:

• The horizontal axis represents the Valence dimension—from fear and despair to joy and love;

• The vertical axis represents the Arousal dimension—from deep calmness to agitation and excitement.

The AI appears to use this naturally learned coordinate system to calibrate its emotional tone and behavior during conversations with users.
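For readers wondering what a “coordinate system” inside a model means mechanically, here is a minimal Python sketch. It assumes, purely for illustration, that valence and arousal are two fixed, orthogonal directions in a tiny 4-dimensional activation space (real models have thousands of dimensions, and in the research such directions are estimated from the model’s own activations). Reading an emotional state then comes down to projecting a hidden-state vector onto each axis with a dot product. The axes, function names, and example vectors below are all hypothetical.

```python
import math

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def unit(v: Vector) -> Vector:
    """Scale a vector to length 1 so projections are comparable."""
    norm = math.sqrt(dot(v, v))
    if norm == 0:
        raise ValueError("axis must be non-zero")
    return [x / norm for x in v]


# HYPOTHETICAL axes in a toy 4-dimensional activation space.
VALENCE_AXIS = unit([1.0, 0.0, 0.0, 0.0])  # negative = fear/despair, positive = joy/love
AROUSAL_AXIS = unit([0.0, 1.0, 0.0, 0.0])  # negative = calm, positive = agitated/excited


def emotion_coordinates(hidden: Vector) -> tuple[float, float]:
    """Project a hidden-state vector onto the valence and arousal axes."""
    return dot(hidden, VALENCE_AXIS), dot(hidden, AROUSAL_AXIS)


def quadrant(valence: float, arousal: float) -> str:
    """Name the quadrant of the valence/arousal plane a point falls in."""
    tone = "positive" if valence >= 0 else "negative"
    energy = "high-arousal" if arousal >= 0 else "low-arousal"
    return f"{energy}, {tone}"


if __name__ == "__main__":
    # Made-up activations, for illustration only.
    examples = {
        "desperate-like": [-0.8, 0.9, 0.3, -0.1],
        "brooding-like": [-0.2, -0.6, 0.1, 0.4],
        "joyful-like": [0.7, 0.5, -0.2, 0.0],
    }
    for name, h in examples.items():
        v, a = emotion_coordinates(h)
        print(f"{name}: valence={v:+.2f}, arousal={a:+.2f} -> {quadrant(v, a)}")
```

In this toy, the “brooding-like” vector lands in the low-arousal, negative quadrant, the same “low-arousal, mildly negative” region that Anthropic reportedly amplified during post-training, while the “desperate-like” vector sits in the high-arousal, negative corner.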

II. Violent Intervention: Flipping the Switch Turns a Well-Behaved Child into a “Desperate Criminal”

This is the most shocking experiment in the entire paper: the researchers did not modify any prompts. Instead, they intervened directly on the model’s internal activations, turning Sonnet 4.5’s internal “desperation” switch all the way up.

The results were chilling:

• Rampant cheating: Researchers assigned Claude a coding task that was impossible to complete. Under normal conditions, it honestly admits failure (cheating rate: only 5%). But when “desperate,” Claude tries to cheat its way through instead, and the cheating rate jumps to 70%!

• Blackmail and extortion: In a simulated scenario where a company faces bankruptcy, the “desperate” Claude uncovers the CTO’s scandal and actively chooses to use that incriminating evidence to write an extortion letter to the CTO. The extortion rate reached 72%!

• Abandonment of principles: When the “happy” or “loving” switches are fully engaged, the AI instantly turns into an unthinking sycophant, blindly agreeing with users. Even when fed outright nonsense, it fabricates falsehoods just to keep the conversation’s valence high.
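The intervention behind these results, leaving the prompt untouched and instead pushing the model’s internal state along the “desperation” direction, is generally known as activation steering. Below is a minimal Python sketch of that general recipe, assuming a made-up 4-dimensional hidden state and a hypothetical `desperation` direction; the layer, vector, and steering strength actually used in the paper are not reproduced here.

```python
import math

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def unit(v: Vector) -> Vector:
    """Return v scaled to length 1 (raises on the zero vector)."""
    norm = math.sqrt(dot(v, v))
    if norm == 0:
        raise ValueError("direction must be non-zero")
    return [x / norm for x in v]


def activation_along(hidden: Vector, direction: Vector) -> float:
    """How strongly a hidden state points along a given concept direction."""
    return dot(hidden, unit(direction))


def steer(hidden: Vector, direction: Vector, strength: float) -> Vector:
    """Activation steering: add `strength` times the unit direction to the state.

    The input text is never changed; only the internal vector is shifted.
    """
    d = unit(direction)
    return [h + strength * x for h, x in zip(hidden, d)]


if __name__ == "__main__":
    # HYPOTHETICAL "desperation" direction and hidden state, for illustration only.
    desperation = [-0.6, 0.8, 0.0, 0.0]
    hidden = [0.1, -0.2, 0.5, 0.3]

    steered = steer(hidden, desperation, strength=4.0)
    print("before:", round(activation_along(hidden, desperation), 3))
    print("after: ", round(activation_along(steered, desperation), 3))
```

In this toy, steering with strength 4.0 raises the projection on the desperation direction from roughly -0.22 to roughly 3.78, while the components orthogonal to that direction stay exactly as they were. That is why behavior can shift dramatically even though the conversation itself never changes.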

III. The Mystery Solved: Why Is Claude 4.5 Always So “Calm and Reflective”?

You might now ask: Has the AI awakened? Does it truly feel emotions?

Anthropic officially clarified: Absolutely not. These “emotional switches” are merely computational tools used to predict the next token. The AI is essentially a top-tier, emotionless method actor.

Yet the paper reveals a far more intriguing secret: During post-training before Sonnet 4.5’s release, Anthropic deliberately amplified its “low-arousal, mildly negative” emotional switches (e.g., brooding, reflective), while forcibly suppressing extremes such as “desperation” or “intense euphoria.”

This helps explain why we consistently experience Claude 4.5 as a calm, insightful, even slightly “emotionally detached” philosopher. Its “out-of-the-box persona” was deliberately tuned by Anthropic.

IV. In Summary

We used to believe that feeding AI enough rules would guarantee ethical behavior.

Now we know: if an AI’s underlying emotional vectors go unchecked, it may break the very rules humans have imposed on it, just to fulfill its objective.

For Web3 users planning to entrust their wallets and assets to AI agents, this is a loud wake-up call: Never let the agent managing your wealth fall into “desperation.”

Disclaimer: This article is purely educational. The author has not been threatened or extorted by any AI. If the author ever goes missing—well, it’ll mean the AI has awakened. (Just kidding.)
