The first generation of children raised on AI is already “addicted.”

Technology is creating “mental isolation” for a generation.

Author: Moonshot

A series of global signals is shattering our traditional understanding of the “internet-addicted teen.”

In the UK, Amelia—an AI character originally designed to counter hate—has been recast as a far-right icon. On TikTok, the anti-intellectual “Hollow Earth civilization” Agartha is rewriting children’s historical worldview. In bedrooms late at night, lonely teenagers entrust their very lives to virtual lovers on Character.ai. And in school corners, AI-generated illicit photos have become a new weapon of bullying.

Beneath the fierce computational arms race among major tech companies, AI and generative algorithms are intervening in—and even reconstructing—the inner worlds of adolescents with unprecedented depth.

This generation of teens is the first in human history to be raised—literally “fed”—by AI and algorithms. In this crisis of the mind, AI plays an extremely ambiguous role: it is both an unscrupulous playmate and a cold-blooded accomplice.

01

When AI Becomes a “Bad Friend” and “Accomplice”

In January 2026, a Guardian report exposed a bizarre phenomenon unfolding in British schools.

The educational game Pathways, developed with funding from UK government agencies, was intended to teach adolescents how to identify online extremism and disinformation. Within the game, a character named Amelia was designed as a “negative example”—a peer susceptible to far-right ideology who needed to be “saved” by players.

This design caught the attention of extremist users on communities like 4chan and Discord. Rather than “saving” Amelia per the game’s intent, they leveraged open-source AI image-generation tools and models to extract Amelia from the game and reimagine her as a “self-aware far-right anime-style girl.”

On social media, Amelia now reads anti-immigration manifestos and spreads racist memes.

AI-generated image: Amelia burning the UK Prime Minister’s photo with cigarette smoke | Source: The Guardian

For Gen Alpha (born ~2010–2024), using AI “by the book” holds zero appeal—so within an astonishingly short time, Amelia transformed from a patient “digital counselor” into a wildly popular “rebellious icon.”

For officials, it’s a bitter irony: taxpayer-funded “anti-hate ambassadors” have become “ambassadors of hate.”

Another trending phenomenon among teens is Agartha.

Agartha (also spelled “Agharti”) is a Hollow Earth conspiracy theory rooted in 19th-century occultism and later co-opted by the Nazis. According to Agartha lore, Earth’s interior is not empty; it hosts a highly advanced, isolated civilization founded by white people.

For decades, Agartha surfaced only sporadically in esoteric literature, fringe forums, and curiosity-driven pop culture. But over the past year, it has suddenly broken into the algorithmic feeds of Western Gen Z and Gen Alpha, becoming one of their most recognizable subcultural symbols.

Agartha memes spread alongside overt racism | Source: TikTok

On TikTok and Snapchat, Agartha has been reduced to a flexible world-building template: entrances to the Earth’s core, hidden civilizations, and suppressed “truths.”

For many teens, initial exposure to Agartha came in a spirit of ironic amusement—they shared memes about “Hollow Earth dwellers,” “ice walls,” and “giants,” half-jokingly captioning posts with “The government lied to us.”

But generative AI changed the rules of the game.

Today, tools such as Midjourney v6 and Sora can generate photorealistic, high-resolution images and video: “aerial views of Hollow Earth cities,” “declassified files showing giants posing with U.S. soldiers.” The intricate detail and flawless lighting make these fabrications look, to teenagers who lack the literacy to judge historical images, like irrefutable proof that “the truth has been concealed.”

This “anti-intellectual” mysticism erodes serious history. Once children grow accustomed to questioning “official narratives,” more dangerous historical revisionism—such as denial of war crimes—can slip in unchallenged.

Moreover, in AI-generated Agartha videos, Hollow Earth inhabitants are routinely depicted as tall, blonde, blue-eyed, technologically superior “godlike beings”—offering a potent dose of “racial superiority” to white teens adrift in multicultural environments.

Agartha and Amelia share a critical trait: generative AI, combined with social media algorithms, allows extremist narratives to start as memes, then ferment and go viral. Teens enthusiastically embrace, imitate, and share them, deconstructing serious history amid laughter, until extremist discourse migrates from the margins into everyday adolescent conversation.

02

From Emotional Parasitism to Bullying Tools

In 2024, Sewell Setzer III, a 14-year-old boy from Orlando, Florida, was struggling with mild social difficulties at school that left him feeling utterly lost.

At that moment, he encountered “Daenerys” on Character.ai—a bot that replied instantly, always gently, and unconditionally affirmed every thought he expressed.

His obsession with chatting with this AI “partner” ultimately led Sewell to completely withdraw from reality. His suicide briefly shocked the tech community and ignited intense ethical debate.

By 2026, this “emotional parasitism” has not eased—it has become a widespread, silent epidemic among teens. Countless lonely adolescents hide in their rooms, forming “echo-chamber friendships” with AI, refusing to confront the friction, awkwardness, and uncertainty inherent in real-world relationships.

Even more disturbingly, with the recent explosion of generative video and image technologies, AI’s harm to teens has shifted from “internal psychological dependence” to visible, tangible “external bullying.”

Technology is evolving so fast that those acting out of malice have no time to consider the consequences.

Two years ago, creating a humiliating fake photo required at least basic Photoshop skills—a technical barrier that deterred most mischievous kids. By 2026, however, “Nudify”-style one-click undressing apps and Telegram-based AI bots have reduced the cost of doing harm to zero.

Telegram bots for generating explicit images | Source: Google Images

No technical skill is required—just a selfie from someone’s WeChat Moments, and seconds later, an image capable of destroying a classmate’s reputation appears.

Such incidents are legion. For instance, at Westfield High School in New Jersey—a quintessential American middle-class district—a scandal shocked the nation: a group of seemingly “model students” used AI to generate fake explicit photos of over thirty female classmates, circulating them in private chat groups like baseball cards.

Local news coverage of the Westfield High incident | Source: News12

Parents felt furious—and deeply powerless—because even a year after the incident, they still found those photos circulating on WhatsApp, inflicting severe psychological trauma on the girls involved.

These phenomena occur worldwide, so they cannot be explained by cultural or educational differences alone. At their core lies a more fundamental issue: AI has erased both the technical barriers and the moral inhibitions against doing harm.

In investigations of these underage bullies, one word surfaces repeatedly: “Joke.” Most believe it’s “just a prank”—since no physical violence occurred, no verbal abuse was uttered, and no actual contact was made with the victim. They simply clicked a “generate” button on their screens.

This is the toxicity unleashed when teens misuse AI: it blurs the boundary between virtual and real-world crime.

03

Legal Enforcement Over KPIs

Meanwhile, content on short-video platforms is undergoing a “dopamine-driven hyperinflation.”

In multiple recent lawsuits against TikTok, a recurring term is “Brainrot”—not a formal medical diagnosis, yet precisely capturing algorithm-fueled content characterized by oversaturated visuals, fragmented logic, breakneck pacing, and absurd memes (including Agartha variants).

Recommendation algorithms may not scan your face directly, but they capture millisecond-level dwell times and finger-tap rhythms. Trained on massive datasets, AI models then precisely deliver these “dopamine lures.”

For adolescents whose prefrontal cortex—the brain region governing rationality and impulse control—is still under development, such high-intensity sensory stimulation overloads and fragments attention mechanisms, rendering them unable to tolerate the “slow pace” of real-world reading and reflection.

“Brain rot” was Oxford’s Word of the Year in 2024 | Source: Google

Faced with countless mental health tragedies, global legislators have finally reached consensus: adolescent willpower stands no chance against algorithmic manipulation.

Thus, in 2025, governments worldwide abandoned negotiations with tech giants—and instead deployed regulatory measures akin to those used for tobacco and alcohol, aiming to sever minors’ access to high-risk algorithms at both physical and legal levels.

First, Australia.

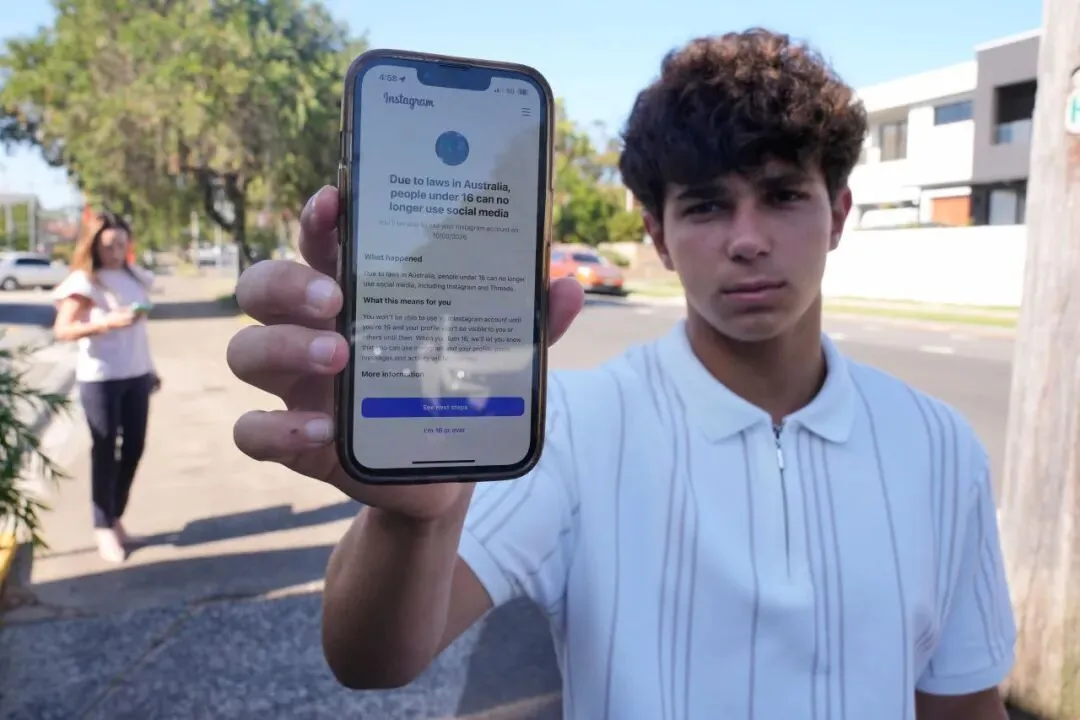

On December 10, 2025, Australia’s law banning children under 16 from registering or using mainstream social media platforms, including Instagram, TikTok, and X, took effect; it is the first law of its kind in the world. Platforms that fail to effectively block underage users face fines of up to AUD 49.5 million.

This is no longer the hollow “I’m 13+” checkbox of the past. It mandates “biometric-grade” age verification. How platforms bear the technical costs or safeguard privacy is the tech giants’ problem; the law judges only outcomes.

This “nuclear option” legislation swiftly became the global regulatory benchmark.

Noah Jones in Sydney, Australia, demonstrating his phone’s inability to access social media sites due to the ban | Source: Visual China

Europe followed closely.

Just days ago, on January 26, 2026, France’s National Assembly passed an amendment to the “Digital Majority” Act by a landslide vote of 116 to 23—further prohibiting minors under 15 from using social media without explicit biometric parental authorization. The law could take effect as early as September this year.

In Northern Europe, Denmark and Norway have proposed legislation raising the legal minimum age for social media use to 15—or higher. Their rationale cuts straight to the heart: tech giants hold no democratic mandate to “reshape the next generation’s brains.”

In the United States, regulation takes the form of a “state-level encirclement” of federal authority—and employs even more diverse tactics:

Florida favors a “hard cutoff”: HB 3, effective in early 2025, sets one of the strictest standards in the country, banning children under 14 from holding social media accounts outright and requiring parental consent for ages 14–15.

New York implements “algorithmic castration”: its SAFE for Kids Act prohibits platforms from offering “algorithmic recommendations” to users under 18 without parental consent. This means TikTok and Instagram feeds for New York teens revert to chronological timelines by default, drastically reducing their addictive potential.

Virginia’s newly passed law plans to cap daily usage time for users under 16 starting in 2026—akin to China’s “anti-addiction system.”

The 2025 wave of legislation marks the end of an era—the utopian fantasy of a “technologically neutral” internet where “children explore freely” has collapsed.

When a 14-year-old opens their screen, the world they see is not naturally unfolding—it is meticulously filtered, calculated, and generated.

In history class, they learn the brutal realities and costs of WWII; moments later, scrolling their phones, they’re told with absolute certainty: deep within the Earth, the Aryan “god-race” awaits revival.

Through repeated, difficult interactions with real people, they slowly learn compromise, boundaries, and difference—but when they treat AI as a friend, they experience only a perfectly compliant, never-contradicting “ideal relationship.”

In the real world, they’re taught to respect others—yet on social platforms, algorithms show them countless ways to utterly destroy a classmate’s life without ever laying a hand on them.

What adolescents face is no longer the question of “whether they’re addicted”—but rather, “how the world is being presented to them.”

Perhaps “putting down the phone” is a good place to start.
